Business as Usual

The next major breach may not begin with something that looks extraordinary.

By Carlos G. Sháněl, Center for Cybersecurity Studies, Casla Institute

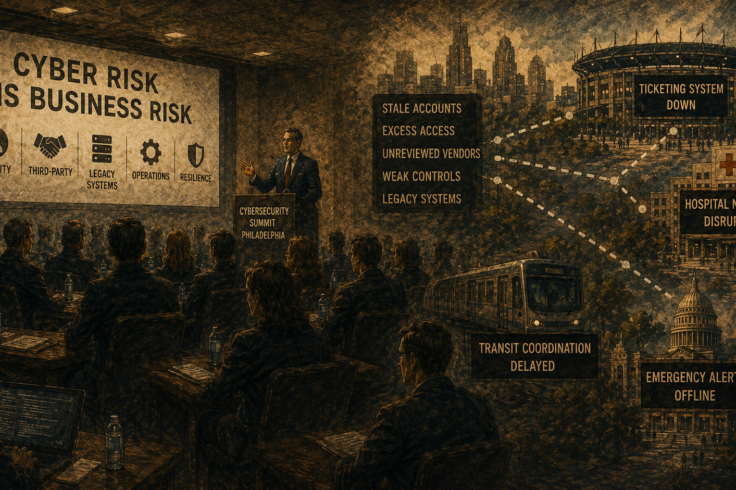

At cybersecurity conferences, the big themes tend to arrive on schedule. Artificial intelligence is changing everything. Threat actors are becoming more sophisticated. The attack surface keeps expanding. All of that was present at the 9th Philadelphia Cybersecurity Summit, where I spent the day among hundreds of security executives, government officials and industry practitioners, and much of it was true. But what stayed with me was something quieter and more unnerving.

The most serious warning I heard in Philadelphia was not about some spectacular future attack. It was about the ordinary, familiar, almost administrative ways that modern breaches begin. A stale account that no one closed. A contractor with more access than necessary. A vendor connection that has not been reviewed in years. A help desk tricked into resetting the wrong credentials. An old system that everyone knows is vulnerable, but nobody can afford to replace.

In other words, the threat that seemed to worry the most experienced people in the room was not chaos. It was normalcy.

That matters because public discussion of cybersecurity still tends to swing between abstraction and spectacle. On one side are lofty conversations about innovation and resilience. On the other are cinematic breach stories, complete with ransomware screens and dark-web villains. What I heard that day was more useful, and more unsettling. Many of today’s attacks do not begin with a dramatic technical breakthrough. They begin with a login.

One of the clearest sessions came from an F.B.I. representative who spoke about cyber risk around major public events. The point was not simply that large gatherings attract malicious attention. It was that cyber incidents now bleed directly into the physical world. Take down a ticketing system, a payment network, a building control platform, a transit coordination tool or an emergency notification channel, and the issue is no longer confined to the I.T. department. It becomes a public-safety problem.

That distinction is easy to miss until someone says it plainly. We have spent years treating cybersecurity as a technical matter that can be delegated downward. But in Philadelphia, the stronger speakers kept returning to a different reality: cyber risk is now inseparable from operations. It touches continuity, logistics, reputation and, in some settings, human safety. If a stadium system fails, if a hospital network stumbles, if a city’s digital coordination tools are disrupted, the consequences do not remain on a screen.

That broader frame also sharpened another point that came up repeatedly: identity has become one of the most important battlegrounds in cybersecurity.

A session from SpecterOps captured this especially well. The speaker argued that many intrusions no longer depend on “hacking in” in the old sense. Attackers often take the easier route. They log in with stolen credentials. They exploit forgotten accounts, inherited privileges and weak contractor controls. They manipulate support functions. They move through systems by abusing trust rather than smashing through defenses.

That struck me because it reframed the problem. Breaches are often described as technical failures. But many of the scenarios discussed at the Philadelphia event sounded more like failures of visibility, discipline and governance. The issue is not always that a system was penetrated by force. Sometimes it is that too many doors were already open, and nobody had a current map.

The summit’s discussions of third-party risk made the same point in a different register. Vendors, software suppliers, managed service providers, healthcare clearinghouses, building systems and remote maintenance tools were all described as potential weak links. One message came through especially clearly: a vendor review is not a permanent assurance. It is a snapshot. Companies change. Products change. Access expands. Staff members leave. Firms merge. A partner that seemed low-risk two years ago may now be one of the least examined paths into your environment.

What made these conversations persuasive was their lack of drama. No one needed to invent a futuristic scenario. The vulnerabilities were mundane and therefore believable. A building system requesting broad access. An H.V.A.C. pathway into a network. A trusted external party retaining privileges longer than necessary. This is the texture of real institutional risk: not glamorous, not theoretical, and often hiding in plain sight.

The sessions on healthcare and legacy systems were similarly grounded. One speaker explained why older systems remain in place even when everyone understands the security problem. In highly regulated sectors, old technologies are often intertwined with medical approvals, budget constraints and essential workflows. They are not simply outdated. They are embedded.

That may be one of the hardest facts for outsiders to absorb. Cybersecurity is not a morality play in which prudent institutions replace vulnerable systems and negligent ones do not. Often the choice is far messier. An insecure system may also be mission-critical. So the work becomes one of containment: isolate it, monitor it, document it, control access around it and hope leadership understands that this is not just a technical inconvenience but an operational vulnerability.

Artificial intelligence hovered over nearly every panel, as expected. Yet here, too, the conference was more sober than fashionable. The strongest conversations avoided both panic and boosterism. Speakers acknowledged that A.I. is already making phishing more persuasive, social engineering more scalable and reconnaissance more efficient. What once required a patient attacker can now be accelerated and refined.

At the same time, security leaders talked about using A.I. themselves for alert triage, summarization and workflow support. The central question was not whether A.I. was coming. The question was whether organizations could govern its use with enough seriousness to understand what data was flowing into which systems, under whose authority, and with what oversight.

Perhaps the most revealing session of the day was one on burnout. It cut through the conference habit of equating more tools with more security. The speaker’s argument was simple: the industry has accumulated too much technology without resolving the underlying operating problem. Teams are buried in alerts, burdened by duplication and asked to process more noise than judgment can reasonably handle.

That diagnosis seemed to tie the whole summit together. Organizations are not only under pressure from attackers. They are under pressure from their own complexity. They are more connected, more dependent on third parties, more reliant on aging systems, more eager to adopt A.I. and more likely to overwhelm their own defenders in the process.

What I heard from speaker after speaker was not that cybersecurity has become impossibly advanced. It was almost the opposite. The fundamentals now matter more because the environment is more fragile. Know who has access. Revisit permissions. Examine vendors continuously, not ceremonially. Segment legacy systems. Exercise response plans. Treat cyber risk as a leadership issue, not a technical silo.

The next major breach may not begin with something that looks extraordinary. It may begin with something that looks routine, even legitimate. That is what made the Philadelphia summit useful. It stripped away some of the industry’s favorite language and returned the issue to its hardest truth.

The most dangerous cyberattacks now often arrive disguised as business as usual.